On the power of Open Source

A tale about Open Source, IBM, and XPM.

I often say: “Open Source is what brought me to IBM and Open Source is why I’m still at IBM today.” It’s true.

Open source at IBM

I’ve been fortunate enough to join a company that was one of the very first in the industry to understand the value of open source. Under the leadership of some brilliant executives, at a time when others talked about open source as some communist endeavor to be frowned upon, IBM figured that if open source was going to radically change the industry, we would be better off being part of it than not. That’s how IBM went on to embrace open source. Of course, this didn’t happen overnight.

Wisely, IBM executives tested their belief starting with a few projects that looked promising and progressively adopted a stronger stance on open source as evidence accumulated over time that this was a winning proposition. Linux was one of those first projects, along with Apache and Eclipse, which came quickly after. These were followed by many others, and over time doing open source became the norm.

When I joined IBM in the fall of 1999, that trend had already started and I fully benefited from it. I joined a small team in Cupertino to work on Xerces, the XML parser that IBM had contributed to the Apache Software Foundation (ASF). I spent my entire time working with the W3C community on developing specifications like XML and DOM and with the ASF community on developing Xerces. And on the weekend I got to go surfing! What was not to like? 😀

Since then I have had the opportunity to work on many different open source projects, such as Hyperledger and, more recently, OpenSSF.

XPM

Working on Xerces wasn’t my first experience in open source, though. I actually started in open source before the term was even coined: I made my first contribution to open source in 1990! I can’t really take full credit for this. As is often the case in life, this kind of thing has at least as much to do with luck as anything else. I had just joined a small research team in France when one of my colleagues, Daniel Dardailler, told me that he and Colas Nahaboo had designed a simple format to store and display color icons on the X Window System. He had quickly written a small piece of code to render them and thought that, if I was interested, it would be worth developing a more complete library for it.

I picked it up, rewrote the whole thing with a more robust parser and additional features, and distributed the first release of XPM2 on August 24th, 1990. Little did I know that I would end up maintaining this format and code for several years and that it would become a de facto standard for X. The last release I produced was on March 18th, 1998. (I still have all the code with the RCS archive and all!)

I now brag about it, but I actually only started doing so a few years ago, when I overheard someone describe a person as “an open source dinosaur who started in open source in the early 2000s”. That’s more than 10 years after I started; I wonder what that makes me…

The power of Open Source

Beyond the bragging rights, what’s more important is that XPM is a good demonstration of the power of open source. Indeed, after I stopped maintaining the library, others picked it up, and it has been continuously maintained to this day. You can find it as part of the freedesktop GitLab repo.

Several releases have been produced to address security issues and memory leaks, as well as to keep the code compiling on newer systems. What better demonstration is there of the power of open source? The library was still useful to someone else, and because it was open source they could just pick it up and continue to maintain it. This obviously wouldn’t have been possible if it had been proprietary.

A wish

I wish vendors who stop maintaining their software would release it as open source so that, as happened with XPM, others who still have an interest in the software could choose to maintain it if they want to. I have an old Garmin marine GPS unit with a proprietary memory card format for which one needs a proprietary reader to access the card from a PC via USB. The problem is that the only available driver for that card reader is for 32-bit Windows!… I actually managed to use an old laptop that was collecting dust to access the card reader so I could update the firmware of my boat’s electronic devices, but that’s absurd.

It’s OK with me that Garmin decides it’s not worth supporting my old hardware anymore, but if they released the source code for the driver, users (a.k.a. “the community”) could at least port it to newer systems themselves. The frustration I get from this is probably not very different from what made Richard Stallman launch the Free Software movement when he couldn’t fix the buggy driver for his printer.

More on XPM

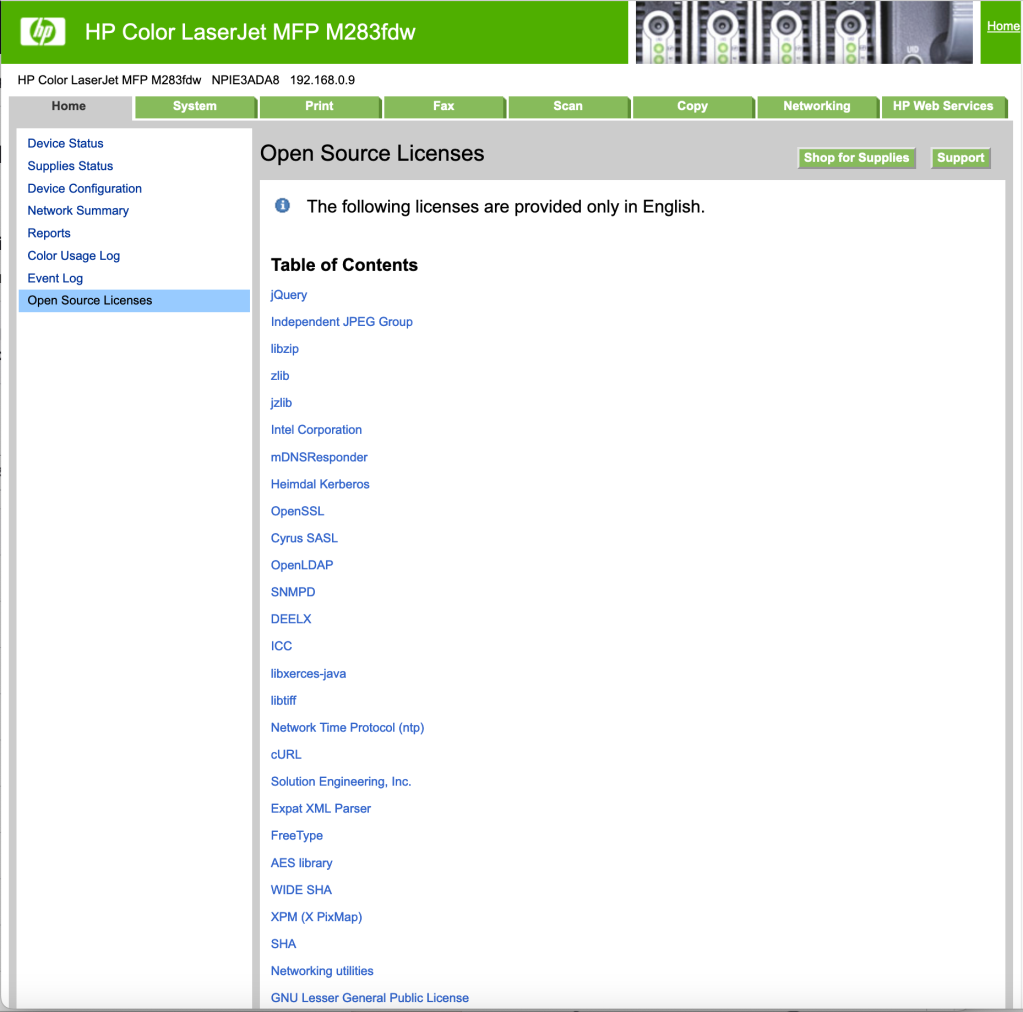

Speaking of printers… a couple of years ago, I noticed an “Open Source licenses” entry on my printer’s webpage. Always interested in open source, I of course clicked on it to see what kind of software HP was using in that printer. Here is what appeared:

Do you see it? Close to the bottom. “XPM (X PixMap)”. Yup, that’s right. It turns out that my laser printer sitting a few feet away from my desk is using code I wrote some 30 years ago! How cool is that now? 😀

In case you’re wondering, it is probably used for the LCD screen, which displays a bunch of icons:

Further reading

If you’re curious about XPM, you can read more on the X PixMap Wikipedia page.

There is also an interesting page on the story of Open Source @ IBM.

On resuming this blog

I stopped posting to this blog many years ago, both because my work shifted away from things I wanted to express myself on publicly and because, in an effort to boost traffic to IBM’s various websites, we were asked to post there rather than on our personal blogs.

I can’t say that I’ve missed it much, to be honest; writing doesn’t come naturally to me. I’m impressed by people who have managed to keep posting on a regular basis way past the time when blogs were super popular and everybody “had to have” one, before microblogging killed most of that. In my case, posting requires some real effort, and it was easier to let it fall by the wayside.

But every now and then the thought crosses my mind that what I’m thinking or talking about at that moment would probably be worth posting about. I’m not so arrogant as to believe the world necessarily cares about what I think but at the same time, having just turned 60* on March 1st, I have undeniably gained some experience that includes at least a few things of value that I hope can be of interest to others.

So I decided I should give posting another shot. This is by no means a commitment to posting regularly, but I have several ideas I might post on. Stay tuned.

* Note: Turning 60 has been pretty uneventful for me. I unfortunately can’t say that the past 60 years have left my body unchanged but as one would expect aging is a gradual process and I felt no different on my 60th birthday than the day before. 🙂

Why I gave up on Box Wine

A few years ago I posted an article titled Box Wine Rules! in which I explained that I had found box wine to be great for table wine because it keeps the wine from deteriorating the way it does in an open bottle. Since then I’ve actually stopped buying box wine, and it’s been on my mind for a long time to post about it to set the record straight.

While what I said about the advantages of box wine remains true, I’ve stopped buying it for two reasons: 1) its impact on the environment, and 2) its possible impact on my health.

Environmental Impact

Unless you’ve been living under a rock, you ought to be aware that plastics are a major problem for our planet, our oceans, and marine life in particular. I’m not even going to bother discussing this in any detail here, but it is clear that we all ought to make an effort to reduce our use of plastics. Unfortunately, box wine uses a plastic bag to store the wine, plus a valve to make it easy to pour, both of which end up adding to the amount of plastic waste.

It’s always difficult to fully assess the environmental impact of anything we do. I was thinking that the production of glass, which is very energy intensive, might not be any better for the environment because of its contribution to global warming, but I have come to the conclusion that it’s still better than adding to the ever-increasing global amount of plastic waste. Sadly, even though those plastic bags carry a logo that may make you believe they are recycled, they typically aren’t! At least glass is, if you dispose of it properly. If only we could start reusing glass containers rather than merely recycling them, the gain would be that much greater.

Health Issue

Then there is the possible health issue related to the wine being stored in a plastic bag. Plastics, especially the soft ones, aren’t stable: they leach chemicals into what they contain. If you have ever tried drinking from a plastic bottle of water left in the heat, you’ve literally got a taste of that. Some plastics are better than others, and you’ll find claims that no negative health impact has ever been linked to the plastics used for food containment, but the reverse is true as well: they have not been proven to have no negative impact. As far as I’m concerned, call me chicken if you want, but I’d rather not take the chance given that an alternative exists.

Conclusion

Sorry box wine, in the end you lose. I’d rather waste a bit of wine, if it comes to that, than add to the terrible impact on the environment and risk unknown consequences for my body.

More on Plastics

Since I read “Plastic Purge” by Mike SanClements a few years ago, I, for one, avoid using plastic containers for drinks and food as much as possible. And if I ever do, I never put them in the microwave oven (like when you want to quickly reheat leftovers you stored in a plastic container). It’s actually not that hard to store your food in a glass container instead, or at least to transfer it to a plate (non-plastic, obviously) before putting it in the microwave. I highly recommend reading this book. It’s easy to read and very informative.

Hyperledger and Blockchain audio and video recordings

It’s been almost two years since I started working on blockchain and got involved in Hyperledger. It’s been an amazing ride.

I’ve gotten back to doing some coding (as time permits) to contribute to Hyperledger Fabric, I’ve contributed to the Hyperledger Technical Steering Committee (TSC), on which I have the honor of sitting as an elected member, and I’ve spent a lot of time on the road speaking at various conferences, workshops, meetups, and customer briefings. Along the way I was also interviewed by journalists, and several articles and videos can now be found on the net.

Below is a list of some of the recordings I think can be of interest:

November 1, 2016 San Francisco meet-up:

March 9, 2017 Online panel Blockchain Bytes: Blockchain Models (video)

March 28, 2017 The Blockchain Summit London:

- BLOCKCHAIN: Standardising to safeguard growth: advocating convergence during rapid evolution (audio)

April 5, 2017 JAX Conference London:

June 27-28 Money2020 Europe Copenhagen (videos):

- Key lessons learned from the IBM Blockchain pilot projects

- What programming languages are required for developing on Blockchain?

- How does IBM make Blockchain real for business?

- How to start developing on Blockchain?

- Blockchain: status quo in 2017

- Blockchain and the role of open source software

Bonus track: One of my favorites remains a press article in which I’m cited in a language I don’t speak! 🙂

That’s it for now, but the show continues and I very much look forward to it!

Working on the Hyperledger Project

At the beginning of this year I started working on the Hyperledger Project. This project is hosted by the Linux Foundation and aims to develop a framework for blockchain.

This is NOT a specific network, like Bitcoin, dedicated to financial transactions. It’s really a framework for people to run their own network(s) adapted to their specific application. The idea is that there isn’t going to be just one big blockchain network but rather many, many such networks dedicated to various applications and involving a variety of players. For that reason the goal of the Hyperledger Project is to develop a very flexible framework that can be configured to meet the specific requirements of each application.

I actually knew very little about blockchain when I started and I still have a lot to learn but it’s been a lot of fun. My primary role is to help the project be successful as an Open Source project and guide the IBM development team in its transition from working on an internal project to working on an Open Source project. As time permits I also contribute and help with the development itself.

It’d been years since I had done any production programming, and it’s been a good opportunity to get a much-needed refresher, actually using many of the tools that one typically uses for development nowadays. The first two weeks were rather humbling. Pretty much every step of the way I was stumbling on a piece of technology I had not used before. But I’m happy to say that I learned and was eventually able to start contributing to the project.

Back at the beginning of the year I felt the time was right for me to start on a new project, and blockchain seemed interesting. After 6 months on the Hyperledger Project I can only say I’m glad I made that choice!

Update on the W3C LDP specification

What just happened

The W3C LDP Working Group just published another Last Call draft of the Linked Data Platform (LDP) specification.

This specification was previously a Candidate Recommendation (CR) and this represents a step back – sort of.

Why it happened

The reason for going back to Last Call, which is before Candidate Recommendation on the W3C Recommendation track, is primarily because we are lacking implementations of the IndirectContainer.

Candidate Recommendation is a stage when implementers are asked to go ahead and implement the spec, now considered stable, and report on their implementations. To exit CR and move to Proposed Recommendation (PR), which is when the W3C membership is asked to endorse the spec as a W3C Recommendation/standard, every feature in the spec has to have two independent implementations.

Unfortunately, in this case, although most of the spec has been implemented by at least two different implementations (see the implementation report) IndirectContainer has not. Until this happens, the spec is stuck in CR.

We do have one implementation and one member of the WG said he plans to implement it too. However, he couldn’t commit to a specific timeline.

So, rather than taking the chance of having the spec stuck in CR for an indefinite amount of time, the WG decided to republish the spec as a Last Call draft, marking IndirectContainer as a “feature at risk”.

What it means

When the Last Call review period ends in 3 weeks (on 7 October 2014), either we will have a second implementation of IndirectContainer and the spec will move to PR as is (skipping CR because we will then have two implementations of everything), or we will move IndirectContainer to a separate spec that can stay in CR until there are two implementations of it, and move the remainder of the LDP spec to PR (skipping CR because we already have two implementations of everything else).

I said earlier that publishing the LDP spec as a Last Call draft was a “step back – sort of” because it’s really just a technicality. As explained above, this actually ensures that, either way, we will be able to move to PR (skipping CR) in 3 weeks.
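The exit rule described above (two independent implementations per feature) and the two possible outcomes of the Last Call review can be sketched as a small Python function. This is only an illustration: the function name and the feature list are hypothetical, and apart from IndirectContainer having a single implementation, the implementation counts are made up.

```python
# Exit criterion described above: two independent implementations per feature.
REQUIRED_IMPLEMENTATIONS = 2


def plan_transition(impl_counts: dict[str, int]) -> dict[str, list[str]]:
    """Split features into those that can move to Proposed Recommendation (PR)
    and those that would go to a separate spec staying in Candidate
    Recommendation (CR) until they reach two implementations."""
    to_pr = sorted(f for f, n in impl_counts.items() if n >= REQUIRED_IMPLEMENTATIONS)
    stay_in_cr = sorted(f for f, n in impl_counts.items() if n < REQUIRED_IMPLEMENTATIONS)
    return {"to_pr": to_pr, "separate_spec_in_cr": stay_in_cr}


if __name__ == "__main__":
    # Hypothetical counts; only IndirectContainer's single implementation comes from the post.
    counts = {"BasicContainer": 2, "DirectContainer": 2, "IndirectContainer": 1}
    print(plan_transition(counts))
    # With a second IndirectContainer implementation, everything can go to PR.
    counts["IndirectContainer"] = 2
    print(plan_transition(counts))
```

Either branch produces a set of features that can go straight to PR, which is why the step back is mostly a technicality.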

Bonus: Augmented JSON-LD support

When we started 2 years ago, the only standard serialization format for RDF was RDF/XML. Many people disliked this format, which is arguably responsible for the initial lack of adoption of RDF, so the WG decided to require that all LDP servers support Turtle, which was then in the process of becoming a standard, as a default serialization format. The WG got praised for this move which, at the time, seemed quite progressive.

Yet, a year and a half later, a period during which we saw the standardization of JSON-LD, requiring Turtle while leaving out JSON-LD no longer appeared so “bleeding edge”. At the LDP WG Face to Face meeting in the spring, I suggested we encourage support for JSON-LD by adding it as a “SHOULD”. The WG agreed. Some WG members would have liked to make it a MUST, but this would have required going back to Last Call, and I, for one, as chair of the WG responsible for keeping it on track to deliver a standard on time, didn’t think this was reasonable.

Fast forward to September: we now find ourselves having to republish our spec as a Last Call draft anyway (because of the IndirectContainer situation), so we seized the opportunity to strengthen support for JSON-LD by requiring LDP servers to support it (making it a MUST).

Please, send comments to public-ldp-comments@w3.org and implementation reports to public-ldp@w3.org.

Lesson learned

I wish we had marked IndirectContainer as a feature at risk when we moved to Candidate Recommendation back in June. Already then we knew we might not have enough implementations of it to move to PR. If we had marked it as a feature at risk we could now just go to PR without it and without any further delay.

This is something to be remembered: when in doubt, just mark things “at risk”. There is really not much downside to it, and it’s a good safety valve to have.

Box Wine Rules!

[ March 6, 2018 update: Also read Why I gave up on Box Wine ]

For once I’ll post on something that has nothing to do with my work. It’s also not exactly news, but I feel like adding my two cents.

When my wife and I were on vacation last year we were given a box of wine – and I don’t mean a box of bottles of wine, but literally a box of wine, as in “box wine”. 🙂

It’s fair to say that we were pretty skeptical at first but we ended up agreeing that it was actually quite nice. Based on that experience we decided to try a few box wines back in California. We tried the Bota Box first and stuck with it for a while but we eventually grew tired of it and switched to Black Box which seemed significantly better. This has become our table wine. We’ve compared the Cabernet against the Shiraz and the Merlot and the Shiraz won everyone’s vote, although I like to change every now and then.

Last weekend I had some friends over for lunch and ended up with a bottle that was barely started. On my own for the week it took me several days to get to the bottom of it. All along I kept the bottle on the kitchen counter with just the cork on.

At the rate of about a glass a day I noticed the quality was clearly declining from one day to the next. Tonight, as I finally reached the bottom of the bottle I drank the last of it without much enjoyment. This is when I decided to get a bit more from the Black Box wine that had now been sitting on the counter for about two weeks.

Well, box wine is known to stay good longer because the bag it’s stored in, within the box, deflates as you pour out wine without letting any air in. As a result the wine doesn’t deteriorate as fast.

If there was any doubt, tonight’s experience cleared it up for me. Although the wine from the bottle was initially of better quality than any box wine I’ve tried to date, after a week it was nowhere near as good.

I’ve actually found box wine to have several advantages. It’s said to be less environmentally unfriendly. (They say more environmentally friendly, but with its plastic bag and valve it doesn’t quite measure up against drinking water. 😉) Because it can last much longer than an open bottle of wine, you can have different ones “open” at the same time and enjoy some variety.

So, all I can say is: More power to box wine! 🙂

[Additional Note: This is not to say that one should give up on bottled wine, of course. The better wines only come in bottles and I’ll still get those for the “special” days. But for the table wine you drink on a day-to-day basis, box wine rules.]

Linked Data Platform Update

Since the launch of the W3C Linked Data Platform (LDP) WG in June last year, the WG has made a lot of progress.

It took a while for WG members to get to understand each other and sometimes it still feels like we don’t! But that’s what it takes to make a standard. You need to get people with very different backgrounds and expectations to come around and somehow find a happy medium that works for everyone.

One of the most difficult issues we had to deal with had to do with containers, their relationship to member resources, and what to expect from the server when a container gets deleted. After investigating various possible paths, the WG finally settled on a simple design that is probably not going to make everyone happy but that all WG members can live with. One reason for this is that it can possibly be grown into something more complex later on if we really want to. In some ways, we came full circle on that issue, but in the process we have all gained a much greater understanding of what’s in the spec and why it is there, so this was by no means a useless exercise.

Per our charter, we’re to produce the Last Call specification this month. This is when the WG thinks it’s done – all issues are closed – and external parties are invited to comment on the spec (not to say that comments aren’t welcome all the time). I’m sorry to say that this isn’t going to happen this month. We just have too many issues still left open and the draft still has to incorporate some of the decisions that were made. This will need to be reviewed and may generate more issues. However, the WG is planning to meet face to face in June to tackle the remaining issues. If everything goes to plan this should allow us to produce our Last Call document by the end of June.

Anyone familiar with the standards development arena knows that one month behind is basically “on time”. 🙂

In the meantime, next week I will be at the WWW2013 conference, where I will be presenting on LDP. It’s a good opportunity to come and learn what’s in the spec if you don’t know yet! If you can’t make it to Rio, you’ll have another chance at the SemTech conference in June, where I will be presenting on LDP as well. Jennifer Zaino from SemanticWeb.com wrote a nice piece based on an interview I gave her.

More on Linked Data and IBM

For those technically inclined, you can learn more about IBM’s interest in Linked Data as an application integration model and the kind of standard we’d like the W3C Linked Data Platform WG to develop by reading a paper I presented earlier this year at the WWW2012 Linked Data workshop titled: “Using read/write Linked Data for Application Integration — Towards a Linked Data Basic Profile”.

Here is the abstract:

Linked Data, as defined by Tim Berners-Lee’s 4 rules [1], has enjoyed considerable well-publicized success as a technology for publishing data in the World Wide Web [2]. The Rational group in IBM has for several years been employing a read/write usage of Linked Data as an architectural style for integrating a suite of applications, and we have shipped commercial products using this technology. We have found that this read/write usage of Linked Data has helped us solve several perennial problems that we had been unable to successfully solve with other application integration architectural styles that we have explored in the past. The applications we have integrated in IBM are primarily in the domains of Application Lifecycle Management (ALM) and Integration System Management (ISM), but we believe that our experiences using read/write Linked Data to solve application integration problems could be broadly relevant and applicable within the IT industry.

This paper explains why Linked Data, which builds on the existing World Wide Web infrastructure, presents some unique characteristics, such as being distributed and scalable, that may allow the industry to succeed where other application integration approaches have failed. It discusses lessons we have learned along the way and some of the challenges we have been facing in using Linked Data to integrate enterprise applications.

Finally, we discuss several areas that could benefit from additional standard work and discuss several commonly applicable usage patterns along with proposals on how to address them using the existing W3C standards in the form of a Linked Data Basic Profile. This includes techniques applicable to clients and servers that read and write linked data, a type of container that allows new resources to be created using HTTP POST and existing resources to be found using HTTP GET (analogous to things like Atom Publishing Protocol (APP) [3]).

The full article can be found as a PDF file: Using read/write Linked Data for Application Integration — Towards a Linked Data Basic Profile
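The container pattern the abstract mentions (POST to create a new resource, GET to find existing ones) can be illustrated with a toy in-memory model. This is a hypothetical Python sketch, not the Linked Data Basic Profile or the LDP specification: the class name, the numeric URI scheme, and the example.org base URI are all assumptions, and it skips HTTP, RDF parsing, and content negotiation entirely.

```python
import itertools
from typing import Optional


class InMemoryContainer:
    """Toy model of a container: POST creates a member, GET lists members.

    Hypothetical sketch: no real HTTP, RDF parsing, or content negotiation.
    """

    def __init__(self, base_uri: str) -> None:
        # Normalize so member URIs are always "<base>/<n>".
        self.base_uri = base_uri.rstrip("/") + "/"
        self._members: dict[str, str] = {}  # member URI -> stored representation
        self._ids = itertools.count(1)

    def post(self, body: str) -> tuple[int, str]:
        """Create a new member; return (status code, URI of the new resource)."""
        uri = f"{self.base_uri}{next(self._ids)}"
        self._members[uri] = body
        return 201, uri  # 201 Created; a real server would send a Location header

    def get(self) -> tuple[int, list[str]]:
        """GET on the container: list the URIs of its members."""
        return 200, list(self._members)

    def get_member(self, uri: str) -> tuple[int, Optional[str]]:
        """GET on a member resource: its representation, or 404 if unknown."""
        if uri in self._members:
            return 200, self._members[uri]
        return 404, None


if __name__ == "__main__":
    bugs = InMemoryContainer("http://example.org/bugs/")
    status, location = bugs.post('<> <http://purl.org/dc/terms/title> "Crash on startup" .')
    print(status, location)  # 201 http://example.org/bugs/1
    print(bugs.get())        # (200, ['http://example.org/bugs/1'])
```

In this sketch the container, not the client, picks the new resource’s URI, which is one simple way to keep the member list consistent.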

Linked Data

Several months ago I edited my “About” text on this blog to add that: “After several years focusing on strategic and policy issues related to open source and standards, including in the emerging markets, I am back to more technical work.”

One of the projects that I have been working on in this context is Linked Data.

It all started over a year ago when I learned from the IBM Rational team that Linked Data was the foundation of Open Services for Lifecycle Collaboration (OSLC), which Rational uses as its platform for application integration. The Rational team was very pleased with the direction they were taking but reported challenges in using Linked Data, and they were looking for help in addressing them.

Fundamentally, the crux of the challenges they faced came down to a lack of formal definition of Linked Data. There is plenty of documentation out there on Linked Data, but not everyone has the same vision or definition. The W3C has a growing collection of standards related to the Semantic Web, but not everyone agrees on how they should be used and combined, and which ones apply to Linked Data.

The problem with how things stand isn’t so much that there isn’t a way to do something. The problem is rather that, more often than not, there are too many ways. This means users have to make choices all the time. This makes starting to use Linked Data difficult for beginners and it hinders interoperability because different users make different choices.

I organized a teleconference with the W3C Team in which we explained what IBM Rational was doing with Linked Data and the challenges they were facing. The W3C team was very receptive to what we had to say and offered to organize a workshop to discuss our issues and see who else would be interested.

The Linked Enterprise Data Patterns Workshop took place on December 6 and 7, 2011 and was well attended. After a day and a half of presentations and discussions, the participants found themselves largely in agreement and unanimously concluded that the W3C should create a Working Group to develop a Recommendation that formally defines a “Linked Data Platform”.

The workshop was followed by a submission by IBM and others of the Linked Data Basic Profile and the launch by W3C of the Linked Data Platform (LDP) Working Group (WG) which I co-chair.

You can learn more about this effort and IBM’s position by reading the “IBM on the Linked Data Platform” interview the W3C posted on their website and reading the “IBM lends support for Linked Data standards through W3C group” article I published on the Rational blog.

On a personal level, I’ve known about the W3C Semantic Web activities since my days as a W3C Team Member but I had never had the opportunity to work in this space before so I’m very excited about this project. I’m also happy to be involved again with the W3C where I still count many friends. 🙂

I will try to post updates on this blog as the WG makes progress.